FAQ

What does locally hosted AI mean?

Locally hosted AI means running AI models on hardware you own, inside your network, so your data never leaves your environment. The model processes prompts on your server, not a cloud vendor's. You pay for the hardware once instead of paying per API call.
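
To make "processes prompts on your server" concrete, here is a minimal sketch of an application sending a prompt to a model served inside your own network. It assumes an Ollama server (one common way to run open-weight models locally) listening on its default address `http://localhost:11434`, with a model pulled under the tag `mistral-small`. Both the tool and the tag are illustrative assumptions, not a description of any particular deployment.

```python
import json
import urllib.request

# Assumption: an Ollama server running on this machine at its default port.
# If the model runs on a separate server in your LAN, change the host here.
LOCAL_ENDPOINT = "http://localhost:11434/api/generate"

# Hypothetical model tag; use whichever model you have pulled locally.
DEFAULT_MODEL = "mistral-small"


def build_request(prompt: str, model: str = DEFAULT_MODEL) -> urllib.request.Request:
    """Build a non-streaming generate request for the local model server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        LOCAL_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = DEFAULT_MODEL, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the server replies with a single JSON object;
    # its "response" field holds the full completion.
    return body["response"]
```

Calling `ask_local_model("Summarise our leave policy in two sentences.")` returns the model's reply as a string. The prompt and the reply only travel between your application and your own server.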

Is locally hosted AI cheaper than cloud AI?

Over 12-18 months, yes, for most real workloads. Cloud AI has a low entry price, but per-call pricing scales directly with usage, and providers can raise prices at any time. Local deployment has a higher upfront hardware cost but a roughly flat ongoing cost (power and maintenance). For most SMEs, the crossover point is a few hundred thousand tokens per day.
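
The crossover is simple arithmetic: local hardware pays for itself once the monthly cloud bill it replaces exceeds the local running cost by enough to cover the upfront spend. The sketch below computes that break-even point. It assumes a single blended price per million tokens (real providers usually price input and output tokens separately), and every figure you feed it is your own; none are quotes.

```python
from typing import Optional


def monthly_cloud_cost(tokens_per_day: float, price_per_million_tokens: float, days: int = 30) -> float:
    """Monthly cloud API bill, assuming one flat (blended) price per million tokens."""
    return tokens_per_day * days / 1_000_000 * price_per_million_tokens


def break_even_months(hardware_cost: float, local_monthly_cost: float, cloud_monthly_cost: float) -> Optional[float]:
    """Months until the upfront hardware spend is recovered by monthly savings.

    local_monthly_cost covers flat running costs such as power and maintenance.
    Returns None when local running costs match or exceed the cloud bill,
    i.e. the hardware never pays for itself.
    """
    monthly_saving = cloud_monthly_cost - local_monthly_cost
    if monthly_saving <= 0:
        return None
    return hardware_cost / monthly_saving
```

With purely hypothetical figures, `break_even_months(8000, 100, 600)` returns `16.0`: an upfront spend of 8,000 recovered at 500 per month. To tie this to token volume, pass the result of `monthly_cloud_cost` (with your own daily token count and provider price) as `cloud_monthly_cost`.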

Does local AI deployment help with PDPA compliance?

Yes, significantly. Keeping data inside your network removes concerns about handing personal data to a third-party processor, simplifies audit trails and avoids the obligations that come with transferring data across borders, since the data stays on your premises. For regulated industries (finance, healthcare, legal), local deployment often turns data handling from a compliance blocker into a non-issue.

What hardware do I need for local AI?

For small teams of up to 20 users, a workstation with a mid-range or high-end GPU (an NVIDIA RTX 4090 or similar) handles most workloads. For SMEs with 50-100 users, plan on a dedicated server with two GPUs (for example, NVIDIA RTX 5090 or AMD Radeon RX 9070 XT cards). For 100+ users or heavy concurrent use, you'll need multiple servers. We size the exact hardware based on your user count and workload shape. Drop us an email for more information.
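
Much of that sizing comes down to GPU memory (VRAM): the model weights must fit, plus working memory (the KV cache) for each request being served at the same moment. Here is a back-of-the-envelope sketch. The per-user cache figure and the 10% overhead are assumptions to replace with measurements from your own serving stack, the cache grows with context length, and `concurrent_users` means simultaneous requests, which is usually far fewer than total users.

```python
import math


def estimate_vram_gb(
    params_billion: float,
    bits_per_weight: float,
    concurrent_users: int,
    kv_cache_gb_per_user: float,
    overhead_fraction: float = 0.10,
) -> float:
    """Rough GPU memory estimate in GB for serving one model.

    Weights: 1e9 parameters at b bits each take params_billion * b / 8 GB.
    kv_cache_gb_per_user and overhead_fraction are assumptions to be
    replaced with measurements from your own serving stack.
    """
    weights_gb = params_billion * bits_per_weight / 8
    kv_cache_gb = concurrent_users * kv_cache_gb_per_user
    return (weights_gb + kv_cache_gb) * (1 + overhead_fraction)


def gpus_needed(total_vram_gb: float, vram_per_gpu_gb: float) -> int:
    """GPUs required, assuming the model can be split evenly across them."""
    if vram_per_gpu_gb <= 0:
        raise ValueError("vram_per_gpu_gb must be positive")
    return math.ceil(total_vram_gb / vram_per_gpu_gb)
```

For example, a hypothetical 24-billion-parameter model quantized to 4 bits needs about 12 GB for its weights. With 10 concurrent requests at an assumed 0.5 GB of cache each, `estimate_vram_gb(24, 4, 10, 0.5)` comes to roughly 18.7 GB, which `gpus_needed(18.7, 24)` places on a single 24 GB card such as an RTX 4090.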

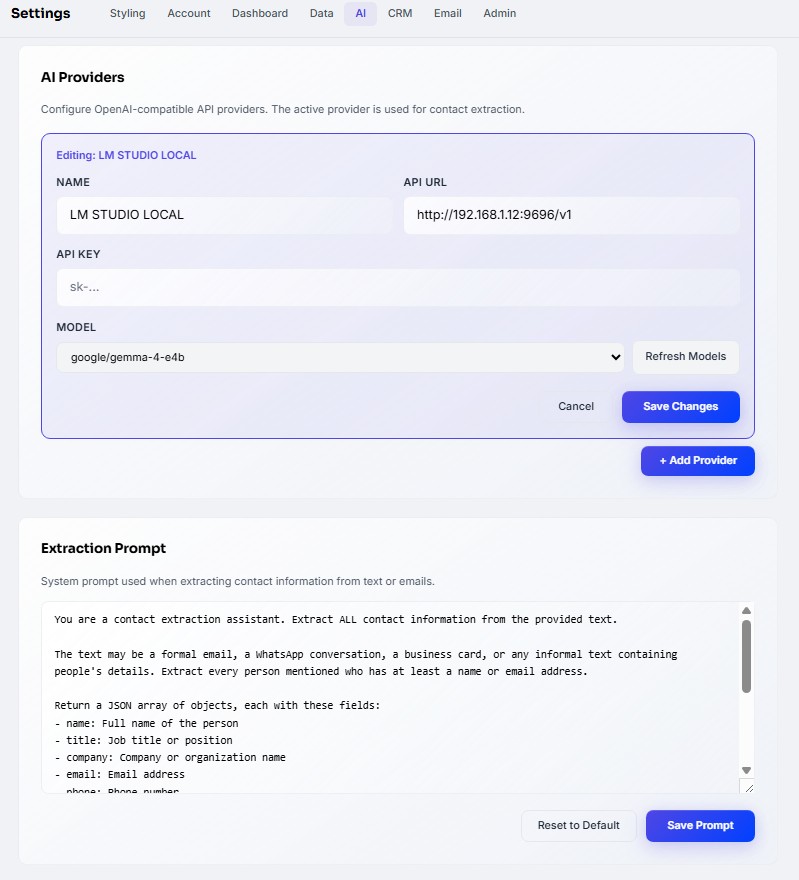

Which AI models can run locally?

Most major open-weight LLMs, including Mistral Large, Mistral Small, Qwen 3.5, Gemma 4 and Zai 4.7 Flash. For specialist tasks, image-generation models run through tools such as ComfyUI, and embedding models handle retrieval. For most business use cases, quality is competitive with cloud models.

How long does a local AI deployment take?

Standard SME deployments ship in 4-8 weeks from first call to production. This covers scoping, hardware procurement, installation, model selection, integration with existing tools and team training. Larger enterprise deployments with fine-tuning take 8-16 weeks.

What are the downsides of local AI deployment?

The upfront cost is higher, the hardware has to be sized in advance and you own the maintenance. For very low-volume use, cloud APIs are still cheaper. For teams with no IT function, cloud is easier, even if it costs more.