Frequently Asked Questions

What is ComfyUI?

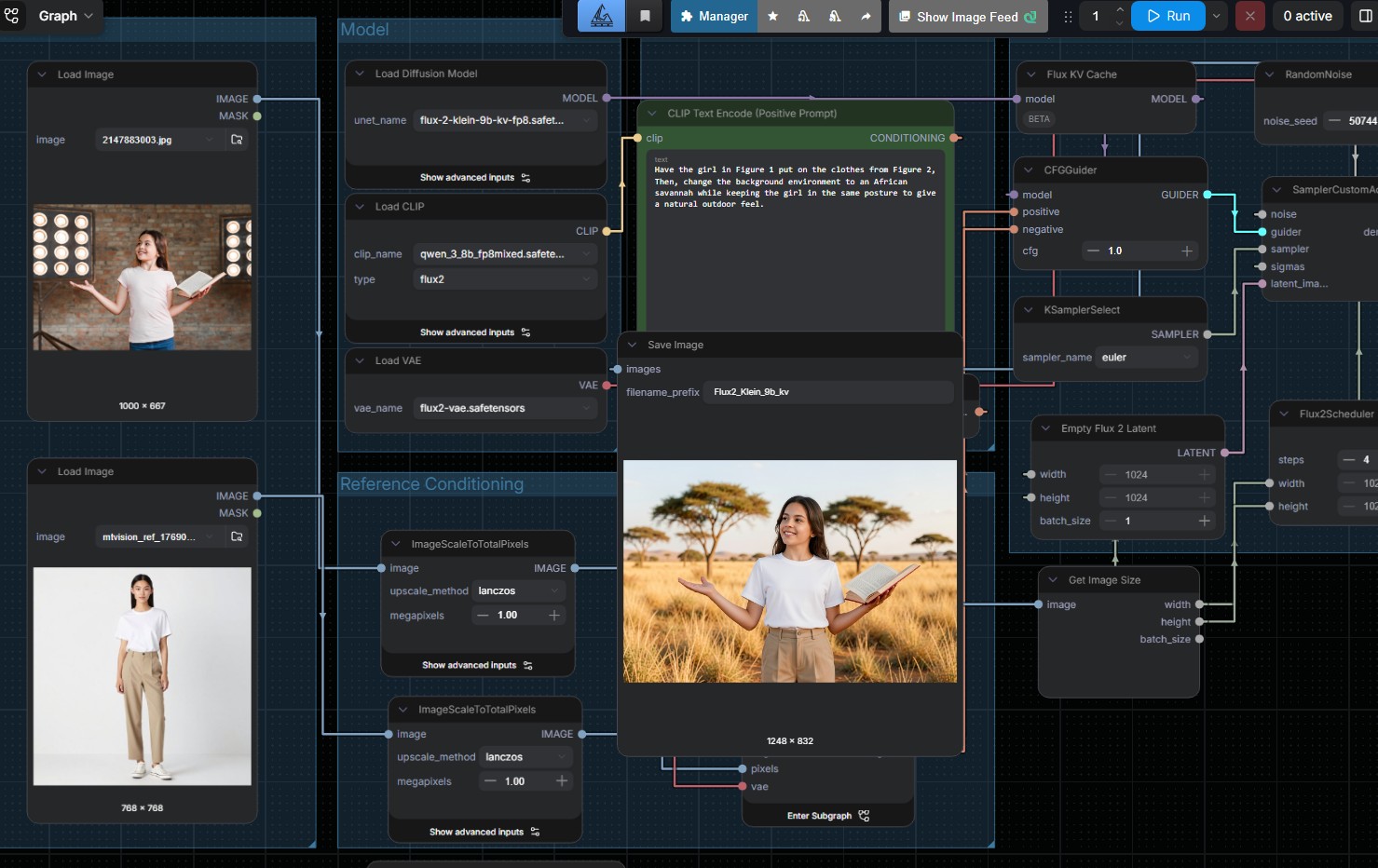

ComfyUI is an open-source graphical interface for running AI image generation models locally on your own hardware. Instead of typing prompts into a chatbot, you build image generation workflows visually by connecting nodes. It runs offline, costs nothing per image after setup and keeps your creative assets on your infrastructure.

Is ComfyUI free?

Yes. ComfyUI itself is free open-source software, and the underlying models (Qwen, Z-Image, Flux) are free open-weight models. Your only costs are hardware and the engineering time to set it up. There are no per-image fees and no subscription.

What hardware does ComfyUI need?

For small creative teams, a basic spec with an RTX 4070 and 32GB RAM is the minimum. For studios or agencies with multiple concurrent users, an RTX 5090 with 64GB RAM is recommended. We also include hardware builds.

ComfyUI vs Nanobanana, Freepik, Leonardo, Midjourney: which is better for business?

For volume or IP-sensitive work, ComfyUI is better because there are no per-image fees, no confidentiality concerns with generated content and full control over the generation pipeline. Cloud apps are easier for occasional personal or exploratory use. For teams producing hundreds of images per week, ComfyUI pays off quickly.

Can I use ComfyUI-generated images commercially?

Yes, commercial use of open-source models is generally permitted. Check the specific model licences for any fine-tunes you use. Because generation runs on your hardware and the source prompt is yours, the output belongs to you.

Is ComfyUI hard to learn?

There is a learning curve. Node-based interfaces reward patience. A designer used to a Freepik prompt box will need a few hours to get comfortable. Once past the learning curve, the productivity gap reverses because you can save workflows, reproduce outputs exactly and tune parts of the pipeline that prompt-only tools do not expose.

Can ComfyUI integrate with our existing content workflow?

Yes. ComfyUI exposes an API so it plugs into automated pipelines, content management systems and agentic workflows. Our AgentsCommand platform can drive ComfyUI as part of a multi-agent content production flow, so you can go from brief to finished visual through one automated pipeline.
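
As a rough illustration of what driving ComfyUI from an automated pipeline can look like, the sketch below queues a workflow over HTTP using only the Python standard library. It assumes a ComfyUI server running on its default local address (127.0.0.1:8188) and a workflow that has been exported from ComfyUI in API format. The helper names `build_queue_request` and `queue_workflow` are hypothetical and exist only for this example; they are not part of ComfyUI or AgentsCommand.

```python
import json
import urllib.request
import uuid

# Assumption: ComfyUI is running locally on its default port.
COMFYUI_URL = "http://127.0.0.1:8188"


def build_queue_request(workflow: dict, client_id: str,
                        base_url: str = COMFYUI_URL) -> urllib.request.Request:
    """Build the POST request that queues a workflow on the /prompt endpoint.

    `workflow` is the node graph as exported from ComfyUI in API format.
    """
    payload = json.dumps({"prompt": workflow, "client_id": client_id})
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_workflow(workflow: dict, base_url: str = COMFYUI_URL) -> str:
    """Send the workflow to the server and return the prompt_id it reports."""
    request = build_queue_request(workflow, str(uuid.uuid4()), base_url)
    with urllib.request.urlopen(request) as response:
        return json.load(response)["prompt_id"]
```

In a pipeline, a script would typically load the exported workflow JSON from disk (for example a file named `workflow_api.json`, a placeholder name), overwrite the prompt text or seed in the relevant node inputs, and pass the resulting dictionary to `queue_workflow`. Keeping request construction separate from the network call makes the payload easy to inspect or log before anything is sent.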